If you desperately need to save every byte, xz at the maximum compression level (9) does the best job for text files like the kernel source. However, it takes a very long time and uses a lot of memory. A good choice when you need to minimize the impact on both time and space is gzip. This is the one I would use to make manual daily backups of a production environment.

I did my own benchmark on a 1.1 GB Linux installation vmdk image: rar = 260 MB, compression = 85 s, decompression = 5 s. All compression levels were set to max; CPU Intel i7-3740QM, memory 32 GB 1600, source and destination on a RAM disk.

I generally use rar or 7z for archiving normal files like documents. For archiving system files I use .tar.xz, created by file-roller or by tar with the -z or -J options along with --preserve, to compress natively with tar and preserve permissions.

Update: as tar only preserves normal permissions and not ACLs anyway, plain 7z plus backing up and restoring permissions and ACLs manually via getfacl and setfacl can also be used. This seems to be the best option for both file archiving and system file backups because it fully preserves permissions and ACLs and has checksums, an integrity test and encryption capability. The only downside is that p7zip is not available everywhere.

The question is from 2014, but in the meantime there have been some trends. bzip2 has been made mostly obsolete by xz, and zstd is likely the best for most workflows. Minimum file size: xz is still the best when it comes to minimal file sizes. Compression is fairly expensive though, so faster compression algorithms are better suited if that is a concern. The pxz implementation can use multiple cores, which can speed up xz compression a bit.
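To check claims like these against your own data, a rough comparison can be sketched with Python's standard-library gzip, bz2 and lzma modules (lzma writes the xz format). This is only a sketch and not the benchmark quoted above: the function name `compare_codecs` and the sample input are made up for illustration, and the xz preset defaults to 6 rather than 9 to keep memory use modest.

```python
import bz2
import gzip
import lzma
import time


def compare_codecs(data: bytes, xz_preset: int = 6) -> dict:
    """Compress `data` with gzip, bzip2 and xz; return size and time per codec."""
    codecs = {
        "gzip": lambda d: gzip.compress(d, compresslevel=9),
        "bzip2": lambda d: bz2.compress(d, compresslevel=9),
        # Raise xz_preset to 9 for the maximum level (needs much more memory).
        "xz": lambda d: lzma.compress(d, preset=xz_preset),
    }
    results = {}
    for name, compress in codecs.items():
        start = time.perf_counter()
        packed = compress(data)
        elapsed = time.perf_counter() - start
        results[name] = {"bytes": len(packed), "seconds": elapsed}
    return results


if __name__ == "__main__":
    # Hypothetical sample input: numbered text lines standing in for real files.
    sample = b"".join(b"line %d of a sample source file\n" % i for i in range(50_000))
    for name, stats in compare_codecs(sample).items():
        ratio = len(sample) / stats["bytes"]
        print(f"{name:6s} {stats['bytes']:8d} bytes  ratio {ratio:6.1f}  {stats['seconds']:.3f} s")
```

Results depend heavily on the input, so it is worth running this on a file that resembles what you actually archive.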

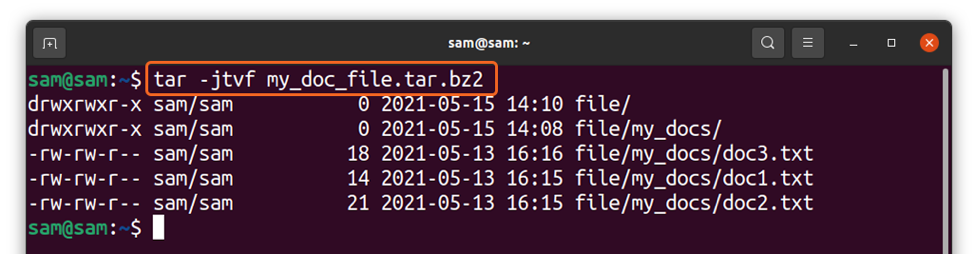

I think that this article provides very interesting results. Here are a few results I extracted from it:

The most size-efficient formats are xz and lzma, both with the -e parameter passed. The fastest algorithms are by far lzop and lz4, which can produce a compression level not very far from gzip in 1.3 seconds while gzip took 8.1 seconds. The compression ratio is 2.8 for lz4 and 3.7 for gzip. So if you really desperately need speed, lz4 is awesome and still provides a 2.8 compression ratio.

.tar.bz2: bzip2 uses a slow compression algorithm. If you are concerned about speed, you could investigate alternative algorithms, such as those used by gzip or lzop.

Setting the BZIP2 environment variable gives a convenient way to supply default arguments. So to use that with tar, you could for example do: $ BZIP2=-1 tar --create --bzip2 --file ...
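The same idea, a bzip2-compressed tar archive written at the fastest level, can also be sketched without the command line using Python's standard-library tarfile module. The function name `make_fast_bz2_archive` and the file names in the demo are hypothetical; `compresslevel=1` is used here as the counterpart of bzip2's -1 option.

```python
import os
import tarfile
import tempfile
from pathlib import Path


def make_fast_bz2_archive(archive_path: str, source_dir: str) -> None:
    """Write source_dir into a .tar.bz2 using bzip2's lowest level."""
    # compresslevel=1 plays the role of BZIP2=-1 in the shell example.
    with tarfile.open(archive_path, "w:bz2", compresslevel=1) as tar:
        tar.add(source_dir, arcname=Path(source_dir).name)


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        # Hypothetical input directory with one small file.
        src = os.path.join(tmp, "project")
        os.mkdir(src)
        Path(src, "notes.txt").write_text("some content\n" * 1000)

        out = os.path.join(tmp, "project.tar.bz2")
        make_fast_bz2_archive(out, src)
        print(os.path.getsize(out), "bytes written")
```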

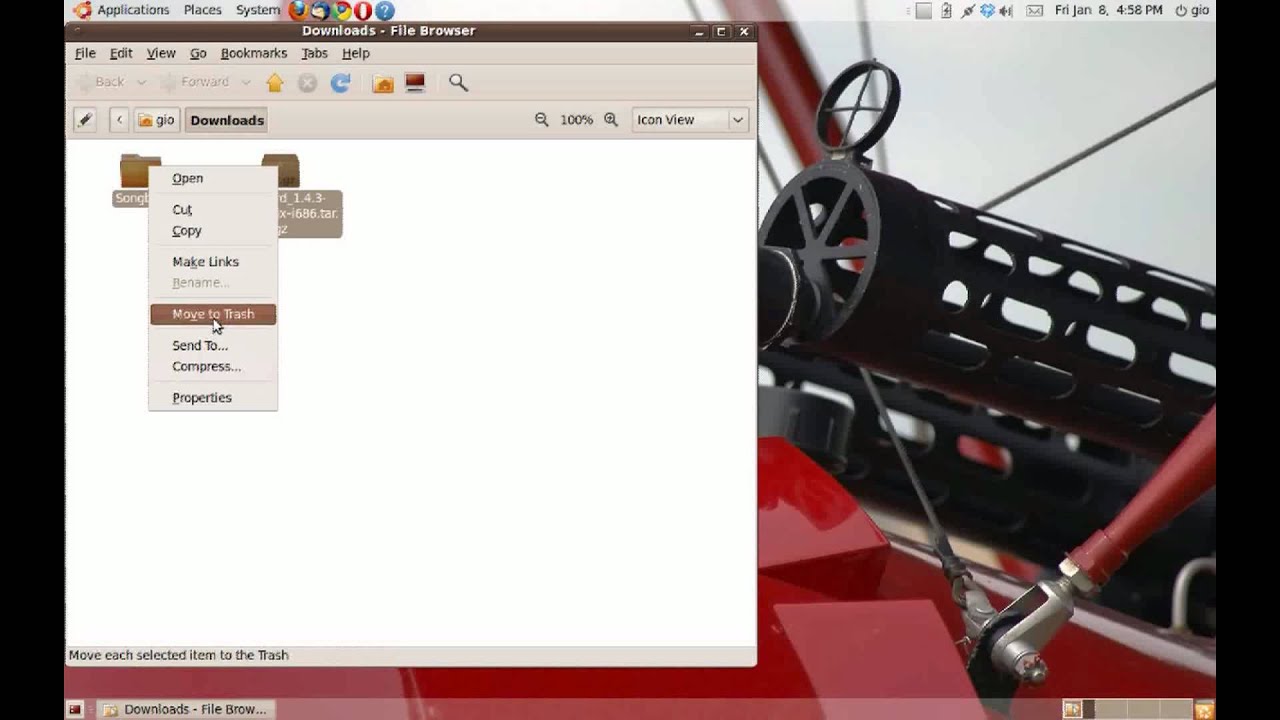

My favorite way, the UNIX way, is one where you use every tool independently and combine them through pipes: tar with tar -> bzip it with bzip2 -> write it to a file.

It is also possible to set bzip2 options through the environment variable BZIP2. From the manual page bzip2(1): "bzip2 will read arguments from the environment variables BZIP2 and BZIP, in that order, and will process them before any arguments read from the command line."
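The "one tool per stage" idea can be mirrored in Python by chaining file objects instead of shell pipes. This is a loose analogy under stated assumptions, not the shell pipeline itself: the function name `tar_through_bzip2` and the demo paths are hypothetical, and each stage is a standard-library object rather than a separate process.

```python
import bz2
import os
import tarfile
import tempfile
from pathlib import Path


def tar_through_bzip2(source_dir: str, out_path: str, level: int = 1) -> None:
    """Chain three stages: tar stream -> bzip2 compressor -> output file."""
    with open(out_path, "wb") as raw:                             # stage 3: the file
        with bz2.BZ2File(raw, "wb", compresslevel=level) as bz:   # stage 2: bzip2
            # Mode "w|" writes a plain, uncompressed tar stream into `bz`.
            with tarfile.open(fileobj=bz, mode="w|") as tar:      # stage 1: tar
                tar.add(source_dir, arcname=Path(source_dir).name)


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        # Hypothetical input directory.
        src = os.path.join(tmp, "logs")
        os.mkdir(src)
        Path(src, "app.log").write_text("started\nstopped\n" * 500)

        out = os.path.join(tmp, "logs.tar.bz2")
        tar_through_bzip2(src, out)
        print(os.path.getsize(out), "bytes written")
```

Keeping the stages separate makes it easy to swap the middle one, for example replacing `bz2.BZ2File` with `lzma.LZMAFile` or `gzip.GzipFile`, without touching the tar step.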

You can set bzip2 block size when using tar in a couple of ways. As you want faster compression with less regard for compression ratio, using bzip2, you seem to want the -1 (or --fast) option. From the manual page bzip2(1), on the options -1 (or --fast) to -9 (or --best): "The --fast and --best aliases are primarily for GNU gzip compatibility. And --best merely selects the default behaviour."
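To see what the block-size setting does on a given input, the levels can be compared with Python's standard-library bz2 module, whose `compresslevel` values 1 to 9 correspond to bzip2's -1 to -9 (block sizes of 100 kB to 900 kB). The function name `sizes_by_level` and the sample data are made up for illustration; this is a sketch, not a benchmark.

```python
import bz2


def sizes_by_level(data: bytes) -> dict:
    """Return the compressed size of `data` for each bzip2 level 1..9."""
    return {
        level: len(bz2.compress(data, compresslevel=level))
        for level in range(1, 10)
    }


if __name__ == "__main__":
    # Hypothetical input, larger than the biggest block size (900 kB).
    sample = b"".join(b"line %d of a sample log file\n" % i for i in range(50_000))
    for level, size in sizes_by_level(sample).items():
        print(f"-{level}: {size} bytes")
```

On inputs smaller than 100 kB, one would not expect the level to change the result much, since the whole input already fits into the smallest block.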
